The Game

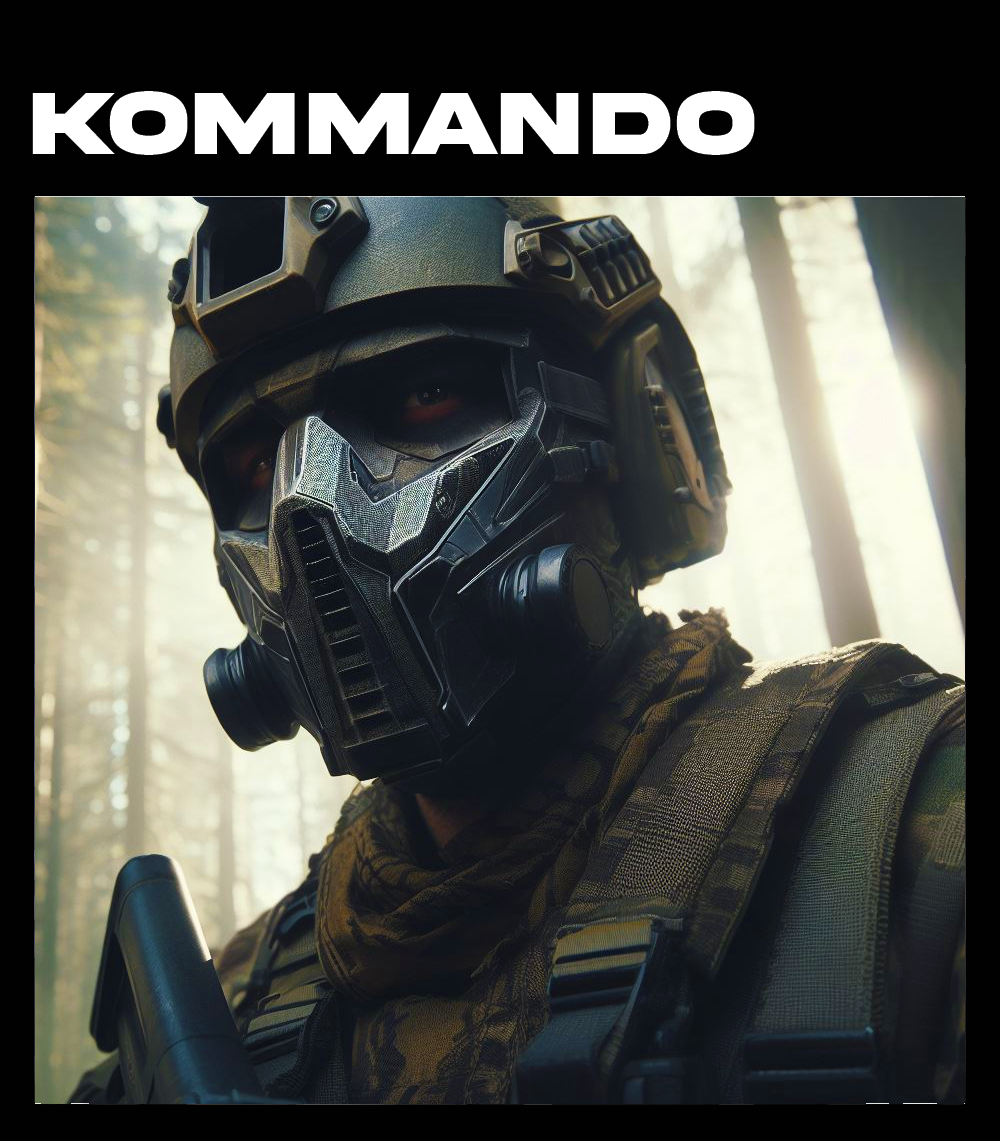

LET THE BATTLE BEGIN

Engage in a live demo with the Kommando developers and discover the system's capabilities firsthand. Schedule your play-through today.

MARKETPLACE

GET MORE WITH THE KOMMANDO ASSETS

LEGENDARY SKINS

ASSETS

NEW MODES

GAMEPLAY

BECOME A KOMMANDO

ASSETS